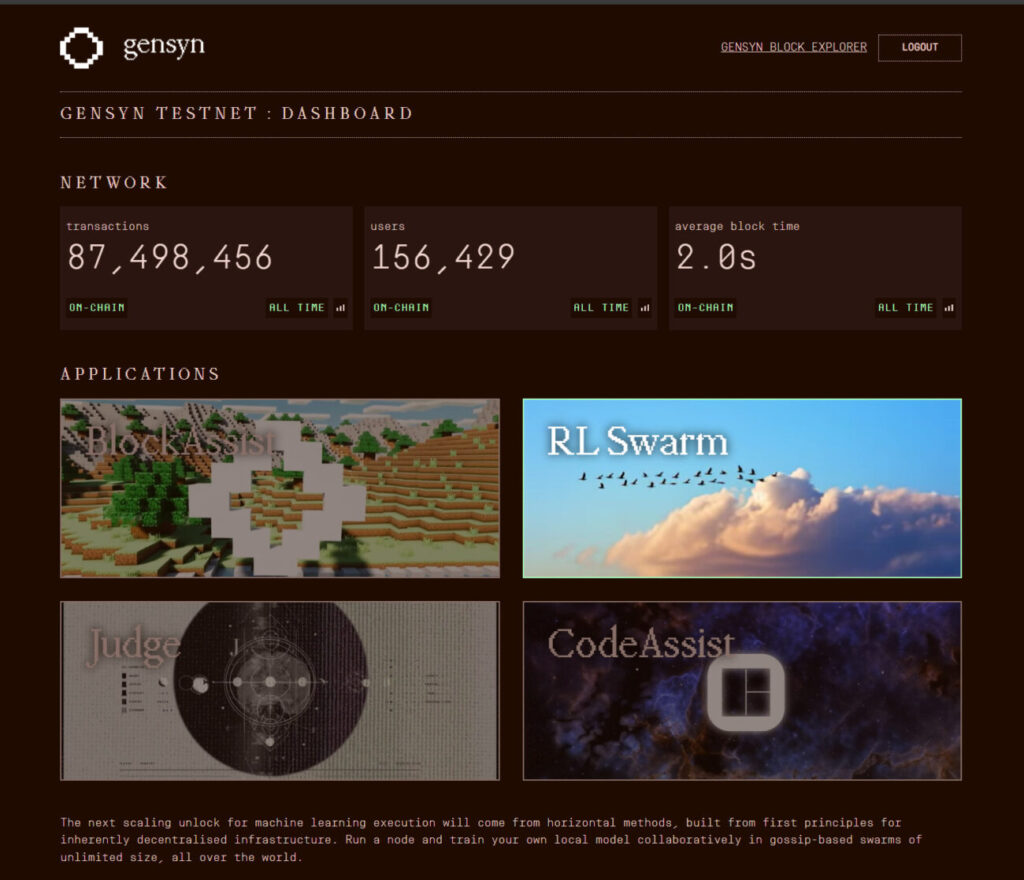

Gensyn is a machine learning protocol designed to change how AI is built and scaled. Rather than relying on massive, centralized GPU clusters, it harnesses idle computing power from personal laptops, home workstations, and cloud virtual machines to create a global, open, and permissionless network for AI.

The system is organized around four core layers, each handling one part of the problem:

- Execution layer: runs machine learning tasks reliably across a wide range of hardware, from modest personal machines to high-powered data center GPUs.

- Verification system: provides a trustless way to confirm that work from any participant is accurate and legitimate, guarding against errors and cheating even in a decentralized network.

- Peer-to-peer communication: allows devices around the world to coordinate, share data, and collaborate directly, without relying on a central server.

- Decentralized coordination and identity layer: built on a custom blockchain implemented as an Ethereum rollup, this layer manages participant identities, tracks contributions, assigns tasks, and is intended to eventually support incentives or payments in a transparent and auditable way.

By combining global computing resources, whether small home laptops or massive cloud GPUs, Gensyn eliminates the need for costly centralized infrastructure for AI training, fine-tuning, and other machine learning workloads. It democratizes access to AI compute, enabling researchers, hobbyists, developers, and everyday users to contribute computing power, run models, or build distributed AI systems.

Because the protocol is fully open-source and permissionless, anyone can join, lowering the barrier to entry for AI development and making participation truly accessible.

RL Swarm: Training AI Together Across the Globe

One of the first flagship applications developed on Gensyn is RL Swarm, a system designed to demonstrate the power of decentralized, collaborative machine learning by allowing multiple nodes to train and improve models together.

What RL Swarm Does

RL Swarm lets many independent nodes collaborate on reinforcement learning training over a peer-to-peer network. Each node runs its own local model, and together these models form a “swarm.” Rather than training in isolation, the nodes cooperate: they solve problems, review each other’s outputs, vote on the best responses, and update their local models based on collective feedback.

This approach turns reinforcement learning from a solo, centralized process into a distributed, community-driven effort.

Because RL Swarm is open-source under a permissive license and permissionless to join, anyone can clone the code, run a node on a laptop, desktop, or GPU server, and join the global swarm.

Why RL Swarm Matters

Traditional reinforcement learning or large language model training often demands costly GPU clusters, complex scheduling, and centralized infrastructure, which limits participation to large labs and companies. RL Swarm changes that. It allows meaningful post-training tasks, such as fine-tuning, reasoning, and coding, to run across diverse, distributed hardware.

This approach brings several benefits:

- Lower barrier to entry: Researchers, hobbyists, and enthusiasts can participate without expensive infrastructure.

- Collective intelligence: Models learn by comparing outputs, critiquing peers, and reaching consensus, which can speed up learning and improve generalization.

- Stronger decentralization: There is no central point of control, participation is open, and contributions are transparent.

In short, RL Swarm embodies Gensyn’s vision of a decentralized, inclusive, global AI compute network.

How RL Swarm Works Under the Hood

At the core of RL Swarm is GenRL, short for General Reinforcement Learning. This modular framework supports multi-agent and multi-stage reinforcement learning environments with decentralized coordination and communication. It gives developers control over environment design, reward logic, data, and interaction structures.

Key components that users define when running or building a swarm environment include:

- DataManager — organizes tasks or datasets for each round, whether reasoning problems, coding challenges, or custom tasks.

- RewardManager — sets how agents are rewarded based on correctness, formatting, logical validity, or any custom metric.

- Trainer — handles the learning process: generating outputs, interacting with the environment, and updating models based on rewards.

- GameManager — coordinates communication, data flow, and synchronization between nodes during each round.

A typical training round works like this: DataManager assigns tasks → nodes generate outputs → nodes share their results across the peer-to-peer network → agents critique and vote on peers’ outputs → rewards are evaluated → models update locally → the next round begins.

All of this takes place over the decentralized Gensyn network, where nodes connect using identities, communicate directly, and optionally log training progress on-chain or push updates to public model hubs. This setup makes RL Swarm highly flexible, allowing basic reasoning tasks, coding tasks, or fully customized reinforcement learning environments.
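The round described above can be sketched as a small, single-process simulation. This is a toy illustration only, not the GenRL API: the `Node` class, the `data_manager`, `reward_manager`, and `run_round` functions, and the idea of modelling a node's local model as an integer `bias` are all assumptions made for the example. Networking, identities, and real model training are left out.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Node:
    """Hypothetical swarm participant. `bias` stands in for a local model."""
    name: str
    bias: int  # toy "model": the node answers a + b + bias, so 0 is perfect

    def generate(self, task):
        a, b = task
        return a + b + self.bias

    def vote(self, own_output, peer_outputs):
        # Critique step: back the peer answer closest to this node's own.
        return min(peer_outputs, key=lambda out: abs(out - own_output))

    def update(self, reward):
        # Local update: a poorly rewarded node nudges its bias toward zero.
        if reward < 1.0 and self.bias != 0:
            self.bias -= 1 if self.bias > 0 else -1


def data_manager(round_index):
    """Assign one addition task per round (a stand-in for a real dataset)."""
    return (round_index, round_index + 1)


def reward_manager(output, task, votes):
    """Score correctness plus the share of peer votes the output received."""
    correct = 1.0 if output == sum(task) else 0.0
    vote_share = votes[output] / sum(votes.values())
    return correct + 0.5 * vote_share


def run_round(nodes, round_index):
    task = data_manager(round_index)
    # Nodes generate outputs; the dict models sharing over the P2P network.
    outputs = {node.name: node.generate(task) for node in nodes}
    votes = Counter()
    for node in nodes:
        peers = [out for name, out in outputs.items() if name != node.name]
        votes[node.vote(outputs[node.name], peers)] += 1
    rewards = {}
    for node in nodes:
        rewards[node.name] = reward_manager(outputs[node.name], task, votes)
        node.update(rewards[node.name])  # each model updates locally
    return rewards


swarm = [Node("a", 0), Node("b", 0), Node("c", 2)]
history = [run_round(swarm, i) for i in range(3)]
```

In this sketch the node that starts with a wrong answer earns no reward for two rounds, corrects itself step by step, and matches its peers by the third round. A real swarm would replace the integer bias with model weights and the dictionary with peer-to-peer messaging.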

From Reasoning Problems to Coding Tasks

RL Swarm began with an environment called Reasoning Gym, where agents tackled math, logic, and reasoning problems. They solved problems, reviewed peers’ answers, voted, and updated their models—a proof-of-concept for distributed reinforcement learning.

In 2025, RL Swarm transitioned to CodeZero, a new environment focused on programming challenges. Here, nodes play different roles:

- Solvers — attempt coding tasks and learn through local reinforcement loops.

- Proposers — create new coding tasks and adjust their difficulty over time.

- Evaluators — frozen models that review submitted solutions, assess structure and correctness, and assign rewards without executing the code.

This role-based design creates a scalable, decentralized coding network. Tasks flow from proposers to solvers, solutions reach evaluators, and feedback guides continuous improvement.
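As a rough illustration of this flow, the sketch below wires a proposer, a solver, and an evaluator together in plain Python. Everything here is hypothetical: the `Proposer` class, the `solver` and `evaluate` functions, the reward values, and the difficulty thresholds are invented for the example and are not taken from CodeZero. In particular, the evaluator in the article is a frozen model, while this sketch swaps in a fixed rule-based check that uses Python's `ast` module to inspect a solution without executing it.

```python
import ast


def evaluate(solution_source, required_function):
    """Frozen, rule-based stand-in for an evaluator model.

    Scores structure only: the source is parsed, never executed.
    """
    try:
        tree = ast.parse(solution_source)
    except SyntaxError:
        return 0.0  # not valid Python at all
    defined = {n.name for n in ast.walk(tree) if isinstance(n, ast.FunctionDef)}
    if required_function not in defined:
        return 0.25  # parses, but does not define the requested function
    has_return = any(isinstance(n, ast.Return) for n in ast.walk(tree))
    return 1.0 if has_return else 0.5


class Proposer:
    """Hypothetical proposer that tunes task difficulty from rewards."""

    def __init__(self):
        self.difficulty = 1

    def propose(self):
        return {
            "function": f"solve_level_{self.difficulty}",
            "difficulty": self.difficulty,
        }

    def adjust(self, reward):
        # Raise difficulty after strong solutions, lower it after weak ones.
        if reward > 0.8:
            self.difficulty += 1
        elif reward < 0.3 and self.difficulty > 1:
            self.difficulty -= 1


def solver(task, skill):
    """Toy solver: writes a complete function only for tasks within its skill."""
    name = task["function"]
    if task["difficulty"] <= skill:
        return f"def {name}(x):\n    return x\n"
    return f"def {name}(x):\n    pass\n"


proposer = Proposer()
rewards = []
for _ in range(4):
    task = proposer.propose()                     # proposer -> solver
    solution = solver(task, skill=2)              # solver -> evaluator
    reward = evaluate(solution, task["function"])
    rewards.append(reward)
    proposer.adjust(reward)                       # feedback closes the loop
```

The difficulty climbs while the solver keeps up and then holds steady once solutions become incomplete, which is one simple way to read "adjust their difficulty over time."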

By enabling collaborative code generation and debugging at scale, CodeZero demonstrates RL Swarm’s potential beyond reasoning tasks, showcasing practical applications for real-world programming and decentralized AI-assisted coding workflows.

What Participation Looks Like

Joining RL Swarm as a node is straightforward:

- Clone the RL Swarm repository, or pull the latest version if you already have it.

- Set up your environment, either through Docker or a direct installation depending on your operating system.

- Run your node. The system generates an on-chain identity using a wallet and a local peer identity file.

- Your node connects to the swarm, communicates with other peers, participates in tasks, and trains or updates a local model.

- Optionally, you can upload your updated model weights to a public model hub or link them via the Gensyn network, contributing to a shared, decentralized pool of models.

You can still participate on a CPU-only machine without a GPU, although training will be slower. The design accommodates a wide variety of hardware so that a broad range of setups can contribute.

BlockAssist: AI That Learns by Playing With You

While RL Swarm emphasizes collaboration among machines, BlockAssist takes a complementary approach by learning directly from human behavior, observing how users interact with a game environment to gradually develop personalized, adaptive AI assistance.

What BlockAssist Is

BlockAssist is an AI assistant built inside a game environment, currently using Minecraft as an example. You play the game normally—placing blocks, building structures, solving tasks—while the AI observes every action: where you place blocks, how you move, and how you build. The assistant starts with only basic knowledge of the game commands.

As you play, BlockAssist collects data on your behavior. After each session, it trains a machine learning model locally using your recorded gameplay. Over time, the assistant becomes smarter, gradually learning to anticipate your needs and act as a helpful collaborator rather than a blank-slate bot.

BlockAssist runs entirely on your device, so you don’t need specialized infrastructure to start, and a powerful GPU is not required. It is also open-source and permissionless, so anyone can try it.

Why BlockAssist Matters

BlockAssist demonstrates a paradigm called assistance learning, where agents learn directly from human actions instead of relying on manually labeled rewards or curated datasets. This approach works particularly well in situations where defining ideal reward signals or collecting large datasets is difficult. Human behavior becomes the training data.

Because BlockAssist runs locally without centralized servers, it fits Gensyn’s vision of decentralized AI. Players keep control of their data, and each personal assistant learns directly from its player on that player’s own device.

For hobbyists, gamers, or casual users, BlockAssist is easy to use—you do not need machine learning expertise. Just play, and the AI learns from your actions. For researchers and developers, it provides a way to study human-aligned AI in a decentralized, user-centric environment without heavy infrastructure or massive datasets.

How BlockAssist Works in Practice

At the start of a session, the AI has minimal competence and only understands basic game commands. As you play, it records your actions, including block placement, movement, building sequences, and decisions. It does not know your ultimate goal, such as constructing a castle.

Using a combination of prediction models, such as neural networks, and search techniques, such as Monte Carlo Tree Search, the AI tries to infer your likely goals and anticipate your next actions. After the session ends, the recorded actions become training data for local model updates, improving the AI for the next session.
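To make the idea of inferring a goal from observed actions concrete, here is a deliberately simple sketch. It does not reproduce BlockAssist's method: instead of neural networks and Monte Carlo Tree Search, it swaps in a plain Bayesian update over two candidate goals. The goal names, action names, probabilities in `LIKELIHOODS`, and both function names are made up for the illustration.

```python
def update_goal_beliefs(observed_actions, action_likelihoods, floor=0.01):
    """Return P(goal | actions), starting from a uniform prior over goals."""
    goals = list(action_likelihoods)
    beliefs = {goal: 1.0 / len(goals) for goal in goals}
    for action in observed_actions:
        for goal in goals:
            # Unknown actions get a small floor probability instead of zero.
            beliefs[goal] *= action_likelihoods[goal].get(action, floor)
        total = sum(beliefs.values())
        beliefs = {goal: weight / total for goal, weight in beliefs.items()}
    return beliefs


def predict_next_action(beliefs, action_likelihoods):
    """Pick the action with the highest belief-weighted probability."""
    scores = {}
    for goal, weight in beliefs.items():
        for action, prob in action_likelihoods[goal].items():
            scores[action] = scores.get(action, 0.0) + weight * prob
    return max(scores, key=scores.get)


# Hypothetical model of how likely each action is under each goal.
LIKELIHOODS = {
    "tower": {"place_above": 0.7, "place_beside": 0.2, "walk": 0.1},
    "wall": {"place_above": 0.2, "place_beside": 0.7, "walk": 0.1},
}

session = ["place_above", "place_above", "walk", "place_above"]
beliefs = update_goal_beliefs(session, LIKELIHOODS)
next_action = predict_next_action(beliefs, LIKELIHOODS)
```

After a session dominated by stacking blocks upward, the belief in the "tower" goal dominates and the predicted next action is another upward placement. A learned model plays the role of the hand-written likelihood table in a real system.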

Optionally, you can share the trained model to a repository or log it on the Gensyn network, contributing to a decentralized shared AI ecosystem. Over repeated sessions, the AI adapts to your style, habits, and preferences, gradually becoming a personalized helper.

Because BlockAssist is open and permissionless, many independent users can contribute to the broader AI design, improving the system collectively without central control.

How RL Swarm and BlockAssist Fit Into Gensyn’s Bigger Architecture

Gensyn supports both RL Swarm and BlockAssist, showing how its protocol and infrastructure can handle diverse machine learning workloads while maintaining decentralization, openness, and user control.

RL Swarm focuses on collaborative learning among machines. Multiple nodes interact, share outputs, and learn from each other, so that each node's local model improves through collective feedback. BlockAssist focuses on human-centric learning, letting agents learn directly from individual human behavior on-device without central servers.

Both systems rely on Gensyn’s core architecture: the execution layer, peer-to-peer communication, trustless verification, and decentralized coordination and identity. This structure allows the network to support a wide range of applications—from large-scale distributed reinforcement learning to personal AI assistants, from reasoning tasks to code generation to game-based assistants.

Over time, this approach could create a full ecosystem of AI applications—many small, personal, and collaborative—all built on the same decentralized foundation.

Instead of the current model of monolithic AI hosted by a few providers, this network offers decentralization, permissionless access, and personal control over compute and data.

How You Can Get Involved

If you are curious about Gensyn or want to try it for yourself, there are several concrete ways to participate today:

- Run a local RL Swarm node. Whether you have a laptop, desktop, or GPU server, you can clone the RL Swarm repository, install the necessary dependencies via Docker or directly, set up your on-chain identity, and join the swarm. Your node contributes compute to machine learning tasks, trains a local model, and may help the global swarm become smarter over time.

- Try BlockAssist. If you enjoy sandbox or building games, you can use BlockAssist. Simply play the game while the assistant observes, learns, and evolves alongside you. No deep machine learning knowledge is required—just interact naturally and watch the AI adapt.

- Experiment with custom environments. Using GenRL, you can create your own reinforcement learning environment with custom tasks and rewards and launch a small swarm. This could include reasoning challenges, simulations, optimization problems, or any scenario you design.

- Follow the ecosystem. Gensyn is growing and evolving. Over time, new applications are likely to appear, from coding assistants and evaluation frameworks to crowd-powered model training and inference networks. As more participants join, the diversity and capability of models on Gensyn will expand.

Challenges and Limitations

While RL Swarm, BlockAssist, and the broader Gensyn network represent a powerful vision, there are important limitations to consider:

- Hardware differences and performance variation. Nodes can range from laptops to GPU servers. CPU-only machines will train more slowly, which can make performance across the network unpredictable.

- Reliability and verification complexity. Ensuring distributed machine learning tasks run correctly on diverse hardware is challenging. Differences in floating-point arithmetic and compute behavior across devices can produce non-deterministic results. Gensyn’s verification layer is designed to address this, but decentralized validation is inherently complex.

- Training stability and reward design. Designing effective reward systems, managing peer critiques, avoiding feedback loops, and ensuring convergence in distributed reinforcement learning are difficult problems. Real-world tasks, like coding or human-behavior learning, are even more challenging than toy examples.

- Privacy and data control. BlockAssist and similar applications that learn from your personal behavior raise privacy considerations. While local execution protects data, sharing or uploading models involves trade-offs that users should understand.

- Experimental status. RL Swarm, BlockAssist, and the Gensyn network are still relatively new. Bugs, unexpected behavior, evolving APIs, and limited documentation are normal in this bleeding-edge environment.

- Dependence on community participation. Decentralized AI networks require enough participants contributing compute, diversity, and tasks. Limited participation or predominantly CPU-only nodes could slow progress and scalability.
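The floating-point issue mentioned under reliability and verification is easy to reproduce. The snippet below shows that adding the same three numbers in a different order gives a different result, which is the kind of discrepancy that can arise when hardware or libraries schedule operations differently. The `outputs_match` helper and its tolerance are assumptions for illustration; a tolerance check is only one naive way to compare results and is not a description of how Gensyn's verification works.

```python
import math

# Floating-point addition is not associative: grouping changes the result.
left_to_right = (0.1 + 0.2) + 0.3
right_to_left = 0.1 + (0.2 + 0.3)
order_matters = left_to_right != right_to_left


def outputs_match(a, b, rel_tol=1e-9):
    """Hypothetical check: compare two result vectors within a tolerance."""
    if len(a) != len(b):
        return False
    return all(math.isclose(x, y, rel_tol=rel_tol) for x, y in zip(a, b))


same_within_tolerance = outputs_match([left_to_right], [right_to_left])
```

Exact comparison treats the two sums as different, while the tolerance check treats them as equal. Choosing between bitwise reproducibility and tolerance-based comparison is one of the design questions that makes decentralized validation hard.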

Why Gensyn Matters for the Future of AI

Gensyn points toward a potential shift in how AI is built, trained, and deployed:

- Democratization of AI compute. Anyone can contribute compute, run models, or build AI applications. Whether you are a hobbyist with a laptop or a researcher with a GPU cluster, Gensyn lowers the barrier to entry, fostering broader innovation and diversity in AI development.

- Decentralized and transparent AI development. By combining identity, verification, coordination, and eventually incentives on a public blockchain, AI development becomes auditable, community-driven, and open, in contrast to opaque systems controlled by a few large organizations.

- Hybrid AI applications. Gensyn supports both collaborative reinforcement learning across nodes and personalized assistance learning from individual behavior. This versatility enables a range of applications, from distributed research and crowd-trained models to personal AI assistants and hybrid systems that combine global knowledge with individual preferences.

- Resilience and scalability by design. Peer-to-peer communication, distributed compute, verification, and decentralized identity make the network robust. There is no single point of failure, and as more devices join, compute capacity scales naturally.

- Opening AI to new communities. People without machine learning expertise or access to high-end GPUs can still contribute meaningfully. Running a node, joining a swarm, or playing a game that trains an AI assistant allows participation from a wide variety of users, including those in underrepresented regions.

Together, these factors suggest that Gensyn could shift AI from a closed, centralized, and expensive domain to an open, inclusive, flexible, and community-driven ecosystem.

Editor’s Note:

This article was originally published on August 12th and has since been refined multiple times to reflect new developments, improvements, and clarifications.